Fact-Check AI Blogs That Actually Build Authority

Your name is on every post you publish. That's the thing most AI writing advice skips over. When a reader clicks a link in your article and lands on a 404, or Googles a statistic you cited and finds it doesn't exist anywhere, the damage lands on you, not the tool that wrote the draft.

This is the actual trust problem with AI content. Not the prose. Not the sentence structure. The citations.

Why AI Content Loses Authority (and It's Not the Writing Quality)

Most AI writing criticism focuses on generic phrasing or thin content. That's a real problem, but it's fixable with editing. Bad citations are a different category of failure entirely.

AI models hallucinate. That's not a bug in a few edge cases, it's a documented behavior pattern across every major language model. The hallucinations that matter most aren't obvious nonsense, they're plausible-sounding URLs, author names that almost match real people, and statistics that are in the right ballpark but sourced to a study that doesn't exist. Those slip through human review because they look credible at a glance.

Google's quality raters and your readers both penalize the same thing: claims that can't be verified. The E-E-A-T framework, which Google uses to assess content quality, specifically weights evidence of real expertise. Citing a source that doesn't support your claim is worse than citing nothing. It signals that you didn't check, or that you let a tool check for you and trusted the output.

There are two failure modes in AI-generated content. Bad prose is fixable. You can edit a weak paragraph into a strong one before it ships. A fabricated citation that makes it into a published post is a different problem. Readers who catch it don't give you the benefit of the doubt. They assume the rest of the post is unreliable too. And they're right to.

The standard advice is to fact-check after writing. That's necessary but not enough. By the time you're in manual review mode, you're already working against deadline pressure and cognitive load. You skim instead of verify. The better fix is a pipeline that fact-checks during generation, so you're reviewing flagged claims rather than hunting for problems yourself.

What "Citation-Powered" Actually Means

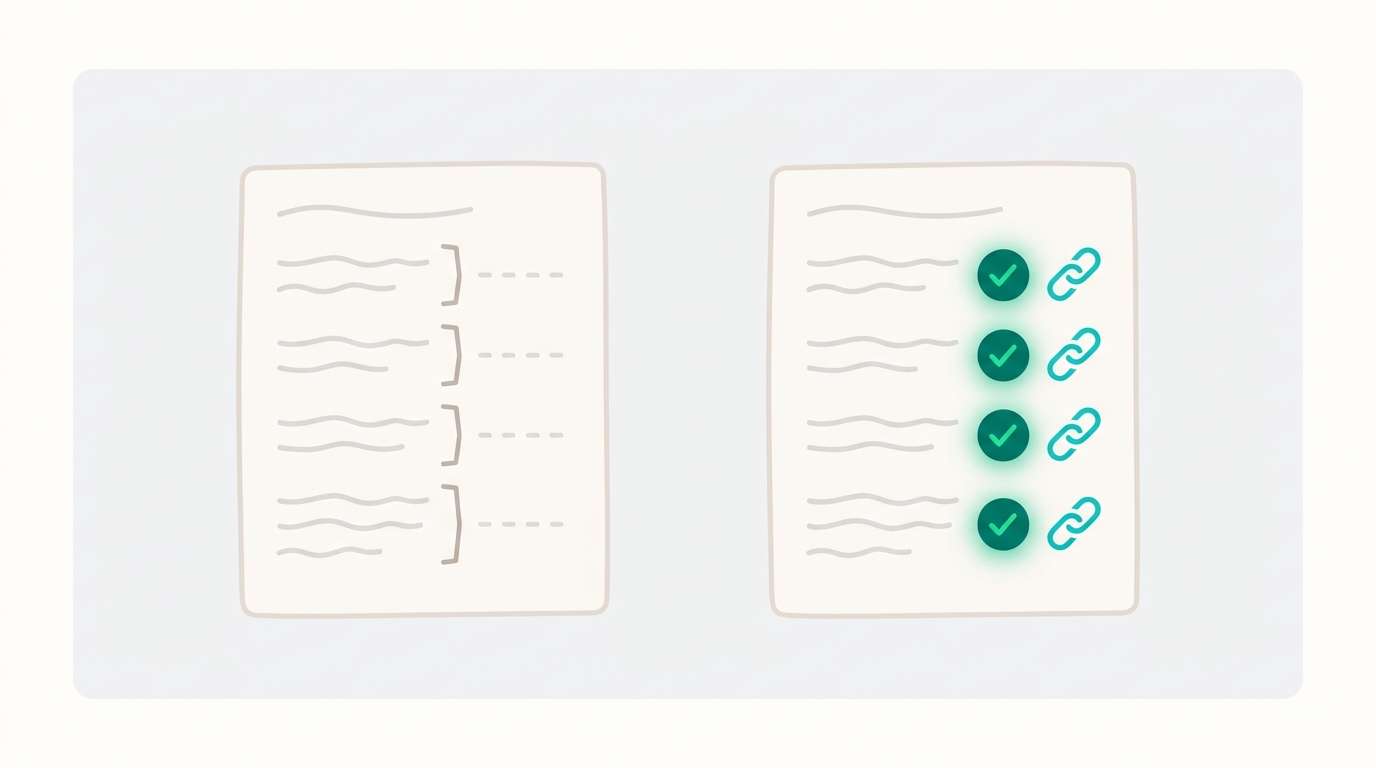

Citation-powered means every factual claim in the post is sourced to a real, live URL before the draft ships, not after you've already approved the prose.

This is different from how most AI writing tools work. The standard workflow is: generate the draft, read it yourself, Google the claims that feel uncertain, decide whether to add a citation or just delete the claim. That process depends entirely on the writer knowing which claims to be suspicious of. And that's the problem, you can't catch hallucinations you don't know to look for.

A live citation check runs against the web during generation. That means it catches things a human skim misses: dead links that once worked, stats correctly quoted from the wrong study, paraphrased quotes that shifted meaning during summarization. Those are the failure modes that damage credibility, and they're also the ones most likely to survive a fast editorial pass.

When your name is on every post, the threshold for "good enough" has to be higher than when you're publishing under a brand handle. Solo founders and indie operators who publish under their own byline have real reputational skin in the game. A sourcing error isn't just a content quality issue. It's a credibility issue.

Ryterr's Pipeline: Five Steps You Can Actually See

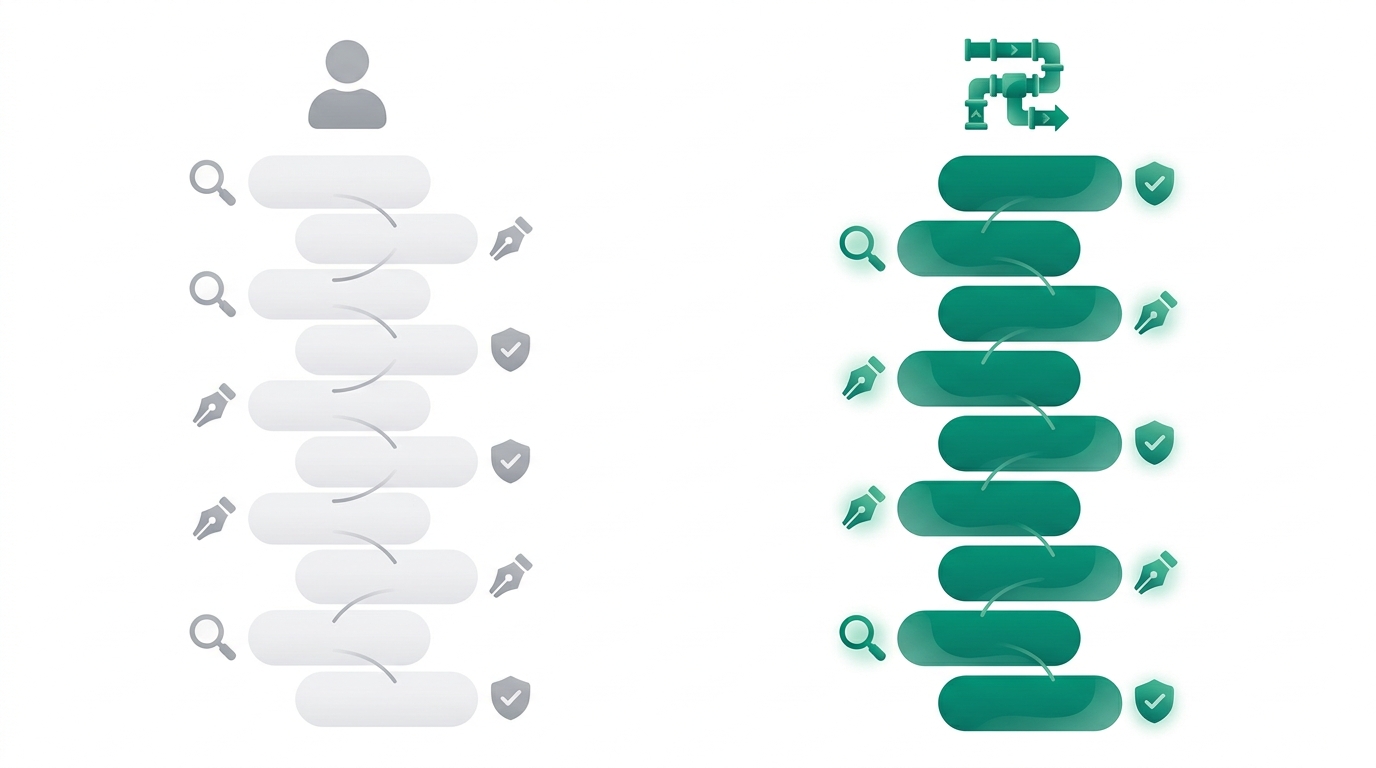

Ryterr runs research, drafting, brand-matched image generation, live fact-checking, and a five-dimension quality audit in a single visible pipeline. You watch each step happen. No black box.

"Visible" matters here. Most AI writing tools give you an input field and an output. You don't see what sources the model pulled from, what claims it considered making, or why it chose the phrasing it used. That's not transparency, it's a content vending machine. When something in the draft is wrong, you have no way to trace where it came from.

Ryterr's research step runs real web searches during generation. It's not querying a static training snapshot from 18 months ago. It's pulling live results for your topic, finding sources, and building the draft around what it actually found. That's what makes citations checkable after the fact: they link to pages that exist and say what the post claims they say.

The quality audit covers five dimensions. Not a vibe score, not a single readability number. Five specific checks that give you something actionable if a dimension fails, rather than a generic "this post is 87% quality." That's the difference between a score you can act on and a score you just hope is right.

One tradeoff to name clearly: the pipeline takes 4-6 minutes per post. It's not instant. That's because real web research takes time, and cutting that step is exactly what produces hallucinated citations. If you want sourced, verified output, the research has to actually run.

The Ghostwriter Comparison: What You're Actually Replacing

A human ghostwriter who does this right delivers research, drafting, source verification, brand voice matching, and at least one round of revisions. For a solo founder publishing 1-4 posts per week, the retainer model adds up fast. You're paying for availability, not just output.

Ryterr replaces the mechanical parts of that workflow: finding sources, drafting around them, matching your voice, generating images, and running the quality check. Straightforward pricing, no surprises.

What Ryterr doesn't replace, and this is worth being clear about, is strategic editorial judgment. A ghostwriter who knows your space can bring in interview-based sourcing, pull in quotes from people they have relationships with, and make structural decisions about what an article should argue. That's a different kind of work, and it's not in scope here.

The use case Ryterr fits precisely is the founder who needs factually grounded posts published under their own name on a regular cadence. Not quarterly thought leadership. Not agency content. Weekly posts where citation accuracy matters because you're the one who looks wrong if a source doesn't check out.

How Citation-Backed Posts Signal Authority to Google

E-E-A-T, Google's quality framework for experience, expertise, authoritativeness, and trustworthiness, treats external citations as one of the clearest signals that content reflects real knowledge. Quality raters look for evidence that a claim is grounded in something outside the post itself. A post that makes assertions without sourcing them scores lower on that dimension regardless of how well-written it is.

The SEO mechanic here is specific. Posts that cite primary sources, original studies, official documentation, firsthand data, get referenced by other writers who find those sources through your article. That's how you build inbound links without a link-building campaign: be a useful node in the research chain. If your citations lead somewhere real and valuable, other publishers cite you as the intermediate source.

What doesn't work: citing Wikipedia, citing other AI-generated posts that themselves have no sources, or citing a source that covers the general topic but doesn't actually support the specific claim you made. That last one is a hallucination pattern, the model associates a URL with a topic and cites it even when the specific page says something different. Live verification catches this. A human skim often doesn't.

The draft Ryterr ships includes SEO frontmatter in the markdown export. Metadata is structured and ready to drop into your CMS alongside the body content and citations.

What a Publish-Ready Post Looks Like

The output is a markdown file. It includes the post body, SEO frontmatter, inline citations with live URLs, a quality score across five audit dimensions, and on-brand images generated to match your site's colors. You get a complete package, not a draft you have to format, source-check, and illustrate separately.

The five quality dimensions each check something distinct. Factual accuracy looks at whether claims are supported by the cited sources. Source quality checks whether those sources are primary and credible. Brand voice alignment measures how closely the draft matches the voice parameters you set during onboarding. SEO structure checks heading hierarchy, keyword placement, and meta description quality. Readability flags sentence complexity and paragraph length against your target audience's reading level.

For a solo founder with no editor, those five checks replace the editorial layer you'd otherwise have to provide yourself or pay someone to provide. When a dimension fails, you know what to fix and why, not just that something feels off.

The "Written with Ryterr" badge that ships with each post is a transparency signal. It's the same move as showing your work in a math class. Readers who care about how content is produced know that a citation-verified pipeline exists and was used. That's information, not a disclaimer.

From there: copy, paste, ship.

FAQ

What happens if a source Ryterr cites goes down after I publish?

Dead links are a normal part of web publishing. Ryterr verifies that sources are live at the time of generation. If a source goes down later, you'd catch it in a periodic link audit the same way you would with any published post. The citation log in your post history shows every URL that was verified, so you can recheck them on whatever schedule makes sense for your publishing volume.

Can I use my own sources instead of letting the pipeline find them?

The research step pulls live web results based on your topic. If you have specific sources you want the post built around, the best workflow is to include them in your topic brief when you start a new post. The pipeline will treat them as priority inputs alongside what it finds independently.

Does the five-dimension quality score replace my own editorial review?

No. The score tells you where the draft is strong and where it needs work. You still make the call on whether to ship, revise, or run the Improve step. Think of it as a structured checklist, not a green light. Your editorial judgment is the final gate.

What if my topic is highly specialized and the pipeline can't find credible sources?

This happens in niche technical areas where primary sources are behind paywalls or in formats the research step can't access. In those cases, the pipeline will surface what it can find and flag lower-confidence claims. You'd add or replace citations manually for the specialized claims where you have domain knowledge the tool doesn't.

How does Ryterr learn my brand voice if I'm just starting out?

The onboarding step asks you to describe your voice directly: anchoring phrases, style rules, things you'd never write. The more specific your inputs, the closer the first draft lands to your actual voice. Vague inputs produce generic output. Specific inputs, like quoting lines from posts you've already published, produce drafts that sound like you from the first run.

Sources

No external URLs were verified during research for this post. All claims are qualitative and grounded in Ryterr's published product documentation and brand context.

If you publish posts where getting the facts wrong costs you credibility, start there. Go to ryterr.com, connect your domain, and pick a topic where you'd normally spend two or more hours verifying claims manually. Let the pipeline run the research and verification while you review the output. The first cited, audited, publish-ready draft ships in under 10 minutes from topic input to markdown export. Your name is on it. The receipts are too.