AI Writing Agents Save Founders 20+ Hours a Month on Content Creation

Most founders know they should be publishing consistently. They also know they're not. The blog tab stays open. The draft stays at 400 words. The week ends.

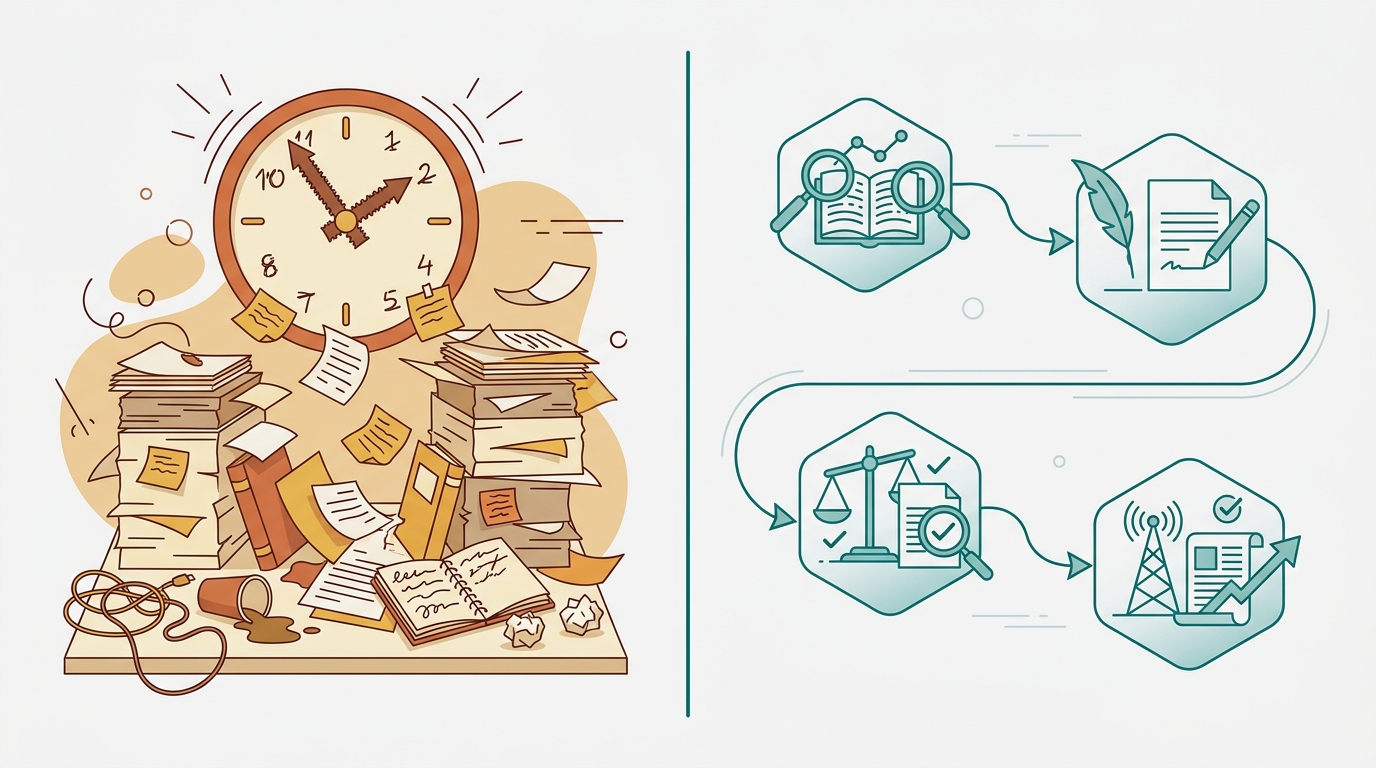

It's not a motivation problem. It's a time problem. Writing one good, sourced, SEO-ready blog post from scratch can take roughly 5 to 6 hours when you account for every step. Four posts a month means 20 to 24 hours. That's two and a half to three full workdays spent on content, every single month.

AI writing agents change that math. Not by producing perfect first drafts (they don't), but by handling the parts of the workflow that eat the most time: research, outlining, drafting, and citation-gathering. What's left for you is the part that actually requires your judgment: a roughly 20 to 30 minute review pass.

This post breaks down exactly where those hours go, what an agent actually handles, and what the real results look like with numbers from teams who've shipped the workflow at scale.

The Actual Time Cost of One Blog Post

Before you can believe the 20-hours claim, you need to see the breakdown. Here's what writing one quality post actually costs, step by step:

- Keyword and competitor research: 60 to 90 minutes

- Outline: 30 minutes

- First draft: 2 to 3 hours

- Fact-checking and citation sourcing: 45 to 60 minutes

- Editing and formatting: 30 to 45 minutes

- Final review and publish: 20 minutes

Total: roughly 5 to 6 hours per post, and closer to 7 if every step runs to the top of its range. At four posts a month, that's roughly 20 to 24 hours gone.
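To check the total, the step estimates above can be summed directly. This is a minimal sketch that uses only the ranges from the list; the names `STEPS` and `total_minutes` are illustrative, and the posts-per-month value of 4 is the figure used throughout this post.

```python
# Per-step time estimates in minutes, copied from the list above: (low, high).
STEPS = {
    "Keyword and competitor research": (60, 90),
    "Outline": (30, 30),
    "First draft": (120, 180),
    "Fact-checking and citation sourcing": (45, 60),
    "Editing and formatting": (30, 45),
    "Final review and publish": (20, 20),
}

POSTS_PER_MONTH = 4


def total_minutes(steps):
    """Return the (low, high) totals in minutes across all steps."""
    low = sum(lo for lo, _ in steps.values())
    high = sum(hi for _, hi in steps.values())
    return low, high


low, high = total_minutes(STEPS)
print(f"Per post: {low} to {high} minutes ({low / 60:.1f} to {high / 60:.1f} hours)")
# Per post: 305 to 425 minutes (5.1 to 7.1 hours)

month_low, month_high = low * POSTS_PER_MONTH, high * POSTS_PER_MONTH
print(f"Per month: {month_low / 60:.1f} to {month_high / 60:.1f} hours")
# Per month: 20.3 to 28.3 hours
```

The low ends land just over 5 hours per post, so the 20-hour monthly figure sits at the bottom of the range rather than the middle.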

MindStudio's research on AI agents for startup founders found that most startup founders lose 41% of their time to repetitive, low-value tasks. Content production is the most visible example because it combines research, writing, editing, and formatting into one long block of uninterrupted work. It's not low-skill. But much of it is repeatable, and repeatable work is exactly what agents are built for.

The 20+ hours figure isn't aggressive. It's a conservative estimate.

What an AI Writing Agent Actually Does

A one-shot prompt is not an agent. Pasting a topic into ChatGPT and hitting enter is not an agent. An agent runs a pipeline.

Here's what that pipeline looks like when it's working correctly:

- Web research: pulls current information from real sources, not training data from 18 months ago

- Competitor analysis: scans top-ranking posts for a given keyword to identify gaps and angles

- Outline generation: structures the post around the search intent, not just the topic

- Drafting: writes a full post matched to your brand voice, not generic SaaS-speak

- Fact-checking: flags unsupported claims and replaces them with verifiable, cited sources

- Quality scoring: grades the post across multiple dimensions before it reaches you
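One way to picture the difference between a one-shot prompt and a pipeline is a list of stages run in order, each leaving a visible record. The sketch below is purely illustrative and does not describe any specific product: `PostState`, `make_stage`, and `run_pipeline` are hypothetical names, and each stage is a placeholder that only logs that it ran instead of doing real research or drafting.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class PostState:
    """Hypothetical container passed from stage to stage."""
    keyword: str
    log: List[str] = field(default_factory=list)  # names of stages that have run


Stage = Callable[[PostState], PostState]


def make_stage(name: str) -> Stage:
    """Build a placeholder stage that only records that it ran."""
    def stage(state: PostState) -> PostState:
        state.log.append(name)
        return state
    return stage


# Stage order mirrors the list above; the identifiers are assumptions.
STAGE_NAMES = [
    "web_research",
    "competitor_analysis",
    "outline_generation",
    "drafting",
    "fact_checking",
    "quality_scoring",
]
PIPELINE = [make_stage(name) for name in STAGE_NAMES]


def run_pipeline(keyword: str, stages: List[Stage] = PIPELINE) -> PostState:
    """Run every stage in order and return the final state."""
    state = PostState(keyword=keyword)
    for stage in stages:
        state = stage(state)
    return state


result = run_pipeline("ai writing agents")
print(result.log)
```

Because every stage appends to `log`, the run leaves a step-by-step trail you can inspect afterwards, which is the property that separates a pipeline from a single black-box call.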

MindStudio's data shows that AI content research reduces prep time by 50% for marketing teams. The drafting compression is even larger because you go from writing to reviewing.

What the founder still does: reads the draft, checks that citations match the claims, adjusts anything that sounds off-voice, and hits publish. That's the 20 to 30 minutes.

The trust issue matters here. Most AI tools produce confident-sounding text with no way to verify the claims. When your name is on the post, that's a real liability. An agent with live web research and inline citations solves this because every fact has a traceable source.

You can check each one before the post goes live. That's a different product category than autocomplete.

The 20+ Hours Claim: Where the Math Comes From

Let's be specific about where the time savings actually land.

Research, which used to take 60 to 90 minutes, compresses to roughly 15 minutes of review when the agent has already pulled sources and surfaced the relevant findings. Drafting, which used to take 2 to 3 hours, becomes a review pass because the agent has already produced the full text. Fact-checking, which used to require opening every source yourself, is handled inline.

Per post, you're recovering somewhere between 4 and 5 hours. At four posts a month, that's roughly 16 to 20 hours. Averi.ai's analysis of AI content generators found that businesses produce content 8 times faster while cutting costs by 80% when they shift to agent-assisted workflows. The 8x speed multiplier lines up with the per-post math: a 5-hour task becomes a roughly 30 to 45 minute one.
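The per-post math can be written out as a quick check. This is a rough sketch under the assumptions stated in this post: a 5-hour manual baseline, the 8x speed figure cited above, and four posts a month. The function names are illustrative.

```python
MANUAL_HOURS_PER_POST = 5.0   # manual baseline used in this post
SPEEDUP = 8                   # speed multiplier cited above
POSTS_PER_MONTH = 4


def assisted_minutes(manual_hours: float, speedup: float) -> float:
    """Minutes per post once the manual time is divided by the speedup."""
    return manual_hours * 60 / speedup


def monthly_hours_saved(manual_hours: float, speedup: float, posts: int) -> float:
    """Hours recovered per month: (manual time - assisted time) per post, times posts."""
    saved_per_post = manual_hours - manual_hours / speedup
    return saved_per_post * posts


print(assisted_minutes(MANUAL_HOURS_PER_POST, SPEEDUP))
# 37.5
print(monthly_hours_saved(MANUAL_HOURS_PER_POST, SPEEDUP, POSTS_PER_MONTH))
# 17.5
```

At these inputs the assisted time falls inside the 30 to 45 minute range and the monthly saving falls inside the 16 to 20 hour range. A longer manual baseline pushes the saving higher.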

For parallel context on AI-driven time recovery: MindStudio found that founders report saving 13 hours per week on average through AI meeting tools. Content is a different workflow, but the order of magnitude is consistent. Repetitive, structured tasks are where agents pay for themselves fastest.

The honest tradeoff: review time doesn't go to zero. Budget 20 to 30 minutes per post. If you skip the review entirely, you're publishing on trust, and the citations are the whole point. Do the check. It's still a fraction of what the manual process costs.

Why SEO Quality Doesn't Drop (And Often Improves)

The standard objection: AI content ranks poorly. It's generic. Search engines are catching up to it. You've seen the posts.

That critique is accurate for one-shot AI output. It doesn't apply to agent-generated content with live citations and brand voice matching.

Here's the difference. A generic AI tool writes from training data. It produces confident-sounding text that may reference outdated information, fabricated statistics, or URLs that don't exist. A writing agent with live web research pulls current sources, inserts real citations, and builds the post around what's actually ranking.

Brand voice matching may matter for SEO too, but indirectly. Generic output can have high bounce rates because it reads like everyone else's. A post that sounds like you wrote it may hold readers longer, and dwell time may be a ranking signal.

The publishing frequency effect may compound over time. One well-researched post per quarter may build little topical authority. Four consistent posts per month, each sourced and internally linked, may build the kind of coverage that earns ranking positions across a topic cluster. The agent doesn't just save you time per post. It makes four posts per month achievable instead of aspirational.

MindStudio's data notes that 85% of enterprises are deploying AI agents by 2026. If your competitors are publishing consistently with agent assistance and you're publishing sporadically by hand, the gap compounds in their favor.

The fact-checking layer may also help with backlinks. Posts with verifiable, sourced claims tend to be the ones other writers cite, while posts that read like AI-generated filler tend to be skipped. Real citations signal real research.

What to Look for in a Writing Agent (And What to Ignore)

Not every "AI writing tool" is an agent. Most are templates with a language model underneath. Here's what actually matters for a founder publishing 1 to 4 posts per month:

Criteria that matter:

- Visible pipeline: you should see each step run, not receive a black-box output

- Live web citations: real URLs from current sources, not hallucinated references

- Brand voice learning: the tool should match your style, not produce generic startup-speak

- Markdown and frontmatter export: so you can copy-paste directly into your CMS

- Transparent pricing: flat rate, no hidden seat fees, no "contact us for enterprise"

Criteria that don't matter for this use case:

- 50+ content templates

- Social caption generators

- Unlimited tier with no quality floor

- "Magic" rewrite buttons with no audit trail

Averi.ai's benchmark sets 8x content production speed as a baseline expectation for modern AI content tools. If a tool can't clear that bar, it's not an agent. It's autocomplete.

The cost comparison is worth naming directly. A ghostwriter retainer for four posts a month can cost more than an AI writing agent, even after you count your own 20 to 30 minutes of review per post. The question isn't whether AI is "as good as" a ghostwriter.

The question is whether your 20 to 30 minute review pass produces posts good enough to rank and build trust. For most founders, it does.

Ryterr is built specifically for this workflow: research, draft, fact-check, a quality score shown in the draft UI, and publish-ready output with inline citations. Full pipeline visible. No retainer. You see every step as it runs.

FAQ

Does the AI writing agent actually learn my brand voice, or does every post sound the same?

It depends on the tool. A writing agent that uses brand voice input (not just a style setting, but trained examples of how you actually write) will match your tone more accurately than a generic model. The more specific your voice inputs are (quoting your own published lines, naming your style rules), the closer the output gets. Generic inputs produce generic outputs.

How much do I still need to review each post before publishing?

Budget 20 to 30 minutes per post for a real review. Check that the citations match the claims they're attached to. Read the intro and conclusion carefully because those are where voice drift shows up most. Don't skip the review entirely. Your name is on the post, and citations only protect you if they're actually correct.

What happens to post quality at higher publishing frequency?

Frequency only hurts quality if you rush the review. The agent's output quality is roughly consistent across posts because it's running the same pipeline each time. What varies is your review attention. Four posts per month with 25 minutes of review each is 100 minutes total, which is manageable. If you scale to 8 posts per month without scaling your review time, quality will drop.
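The review budget is simple division, and it shows how quickly attention per post shrinks when volume doubles. This is a small sketch using the numbers from this answer; the function names are illustrative.

```python
def review_minutes_per_post(monthly_budget_minutes: float, posts_per_month: int) -> float:
    """Minutes of review each post gets if the monthly budget is split evenly."""
    if posts_per_month <= 0:
        raise ValueError("posts_per_month must be positive")
    return monthly_budget_minutes / posts_per_month


def budget_needed(minutes_per_post: float, posts_per_month: int) -> float:
    """Total monthly review minutes required to keep a fixed per-post review time."""
    return minutes_per_post * posts_per_month


print(review_minutes_per_post(100, 4))  # 25.0
print(review_minutes_per_post(100, 8))  # 12.5
print(budget_needed(25, 8))             # 200
```

Holding the budget at 100 minutes while moving to 8 posts halves the review time per post. Keeping 25 minutes per post at that volume takes 200 minutes a month.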

Can agent-generated content actually rank on Google, or will it get filtered out?

Google's public guidance targets "helpful, reliable, people-first content," not AI-generated content specifically. A post with real citations, accurate information, and a distinct voice passes that test. A post with fabricated statistics and generic structure doesn't. The distinction is research quality and verifiability, not whether a human or agent wrote the first draft.

What if my topic is too niche for the agent to find good sources on?

Run a quick source check before the full pipeline. If the agent's research step returns thin results (fewer than 3 to 4 strong sources for a specific claim), you have two options: supplement with sources you already know, or write that section qualitatively without hard statistics. A shorter, accurate section beats a longer one padded with unsupported numbers.
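The source check can be expressed as a simple threshold. The sketch below assumes you have already listed, by hand or from the agent's research output, which sources back each claim. The threshold of 3 is the lower end of the range above, the example claims and source labels are made up for illustration, and deciding what counts as a "strong" source is still your call.

```python
from typing import Dict, List

MIN_STRONG_SOURCES = 3  # lower end of the "3 to 4" rule of thumb above


def thin_claims(sources_by_claim: Dict[str, List[str]],
                minimum: int = MIN_STRONG_SOURCES) -> List[str]:
    """Return the claims backed by fewer than `minimum` sources."""
    return [
        claim
        for claim, sources in sources_by_claim.items()
        if len(sources) < minimum
    ]


# Hypothetical research output: claim -> sources you judged to be strong.
research = {
    "claim A": ["source 1", "source 2", "source 3"],
    "claim B": ["source 4"],
    "claim C": [],
}

print(thin_claims(research))
# ['claim B', 'claim C']
```

Any claim the function returns is a candidate for one of the two options above: add sources you already know, or rewrite that section qualitatively.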

Sources

- MindStudio: AI Agents for Startup Founders

- Averi.ai: 10 Fastest AI Content Generators Marketers Trust

- Writer: KPMG Customer Story

- 8allocate: Top 50 Agentic AI Implementations and Use Cases

Pick one post you've been putting off. Run it through a writing agent with live research enabled. Before you publish, check three citations: open the source URL, confirm the claim matches, confirm the URL is real. If it passes that test, the tool earns a second post.

Ryterr runs the full pipeline in about 5 minutes and shows you every step as it happens. Twenty hours back per month is 240 hours per year. That's six full work weeks. Run the first post this week.