How to Cite Sources in AI Blog Posts (And Actually Get Cited Back)

Many AI-generated blog posts do one of two things with citations: invent them, or skip them. Neither works. Fabricated URLs erode trust the moment a reader clicks through to a 404. Vague attribution like "according to experts" tells no one anything, including the AI engines that decide whether your content is worth surfacing.

Here's the part most founders miss: the same citation habits that make a post trustworthy to your readers can also improve the chances that AI engines cite you back. Good citations raise the odds; they don't guarantee anything.

This post gives you a concrete, repeatable framework. Source selection, recency rules, attribution formatting, post structure, and a pre-publish checklist you can run on every draft. No academic theory. Just the specific decisions that separate posts worth citing from ones that disappear.

Why Your Citations Are Probably Broken Right Now

Even frontier LLMs with retrieval tools active achieve only 39-77% factual accuracy in citations, according to a 2025 study on arxiv.org. That's not a rounding error. At the low end, models get roughly three in five citations wrong; even at the high end, nearly one in four is wrong.

It gets worse as you scale. The same research found that fact-check accuracy drops approximately 42% as tool calls increase from 2 to 150. More retrieval doesn't fix the underlying problem. It can make it worse.

There are two failure modes to watch for in your own posts:

- Fabricated URLs. The citation looks real. The link goes nowhere, or points to a page that doesn't contain the claim. A reader who clicks that link once won't click your next link.

- Vague attribution. "Studies show..." or "research indicates..." with no named source, no year, no URL. AI engines can't extract this. Readers can't verify it. It reads like filler because it is.
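If you want to catch vague attribution before a reader does, a rough first pass can be automated. The Python sketch below is a heuristic, not an extraction model: it flags sentences that use a stock phrase such as "studies show" but contain neither a four-digit year nor a URL. The phrase list and the `flag_vague_attribution` name are hypothetical choices for illustration. A sentence with a year but no named source will still slip through, so treat a clean result as "nothing obvious," not "verified."

```python
import re

# Hypothetical starter list of stock phrases; extend it for your own drafts.
VAGUE_PHRASES = [
    r"studies show",
    r"research (?:shows|indicates|suggests)",
    r"according to experts",
    r"experts (?:say|agree)",
]
VAGUE_RE = re.compile("|".join(VAGUE_PHRASES), re.IGNORECASE)
YEAR_RE = re.compile(r"\b(?:19|20)\d{2}\b")  # a four-digit year like 2025
URL_RE = re.compile(r"https?://\S+")


def flag_vague_attribution(text: str) -> list[str]:
    """Return sentences that use a vague attribution phrase but contain
    neither a year nor a URL. A heuristic, not a verifier."""
    # Naive sentence split on ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        has_anchor = YEAR_RE.search(sentence) or URL_RE.search(sentence)
        if VAGUE_RE.search(sentence) and not has_anchor:
            flagged.append(sentence)
    return flagged


draft = (
    "Studies show that founders undercharge. "
    "A 2025 study on arxiv.org measured citation accuracy."
)
print(flag_vague_attribution(draft))  # → ['Studies show that founders undercharge.']
```

The second sentence passes because it carries a year; whether "a 2025 study on arxiv.org" names the source specifically enough is still your call.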

For founders, this isn't just an SEO miss. Your name is on the post. A broken citation is a trust liability that compounds over time, especially if you're trying to build a reputation in a technical niche where readers actually check.

What "Source Quality" Actually Means for a Blog Post

Not all sources are equal, and different AI engines weight them differently. There are four practical dimensions worth understanding:

- Relevance: Does the source match the keyword and intent of the claim you're making?

- Authority: Does the domain carry E-E-A-T signals? Is it a primary source or a third-party summary?

- Recency: Is the source fresh relative to the query? (More on this in the next section.)

- Variety: Are your citations spread across source types, not clustered on one domain?

That last one matters more than most people expect, because AI engines don't all favor the same sources. What ranks well in one engine doesn't automatically rank in another.

The platform bias problem is real. Research from Discovered Labs found that ChatGPT favors Wikipedia in 47.9% of its top citations, while Perplexity leans heavily on Reddit, which accounts for 46.7% of its top sources. Your citation framework can't fight these tendencies. It has to account for them.

The practical rule: for any factual claim, prefer primary sources. Original studies, official documentation, company earnings reports, government data. Cite an aggregator only when the primary source is paywalled or otherwise unavailable. When you cite a primary source, you're giving AI engines something they can directly verify.
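The variety and primary-source rules can be spot-checked with a few lines of Python. This is a minimal sketch under stated assumptions: you tag each citation as primary or not by hand, "variety" is approximated by how concentrated your citations are on a single domain, and the 50% `max_domain_share` cutoff is an arbitrary illustrative threshold, not a number from any of the research cited here.

```python
from collections import Counter
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Citation:
    url: str
    is_primary: bool  # tagged by hand: original study, official docs, filing, government data


def source_mix_report(citations: list[Citation], max_domain_share: float = 0.5) -> dict:
    """Summarize domain concentration and primary-source coverage.

    max_domain_share is an illustrative cutoff, not a published standard.
    """
    if not citations:
        return {"top_domain": None, "top_share": 0.0, "clustered": False, "primary_count": 0}
    # Normalize hosts so www.example.org and example.org count as one domain.
    domains = Counter(
        urlparse(c.url).netloc.lower().removeprefix("www.") for c in citations
    )
    top_domain, top_count = domains.most_common(1)[0]
    top_share = top_count / len(citations)
    return {
        "top_domain": top_domain,
        "top_share": top_share,
        "clustered": top_share > max_domain_share,
        "primary_count": sum(c.is_primary for c in citations),
    }


report = source_mix_report([
    Citation("https://example.org/study-a", True),
    Citation("https://www.example.org/study-b", True),
    Citation("https://example.com/roundup", False),
])
print(report["top_domain"], report["clustered"], report["primary_count"])  # → example.org True 2
```

Domain concentration is only a proxy: three different primary studies hosted on arxiv.org may be perfectly fine, so read a "clustered" flag as a prompt to look, not a verdict.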

The Recency Standard: How Old Is Too Old?

Recency isn't a single rule. It depends on what kind of claim you're making.

A 2019 study on human memory formation is probably still valid. A 2019 stat on AI adoption rates is not. The half-life of a factual claim varies by topic, and treating all sources the same way is how posts become liabilities over time.

Getfancy.ai's research suggests that recency is an important factor in AI citation behavior, with fresher content often outperforming older content on time-sensitive queries. That preference isn't arbitrary. It reflects how quickly facts in fast-moving categories become outdated.

Here is the working ruleset I recommend:

- Statistics and market data: sources published within 18 months

- Methodology and frameworks: 36 months is acceptable if nothing newer exists

- Product comparisons: 6 months maximum
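The ruleset translates directly into a lookup table. The sketch below assumes each source has a known publication date and that you label each claim with one of three hypothetical claim-type names. It reads "within 18 months" as "on or before the same calendar day 18 months later," clamping to the last day of shorter months.

```python
import calendar
from datetime import date

# Maximum source age in months per claim type, from the ruleset above.
# The claim-type labels are hypothetical names chosen for this sketch.
MAX_AGE_MONTHS = {
    "statistic": 18,
    "methodology": 36,
    "product_comparison": 6,
}


def add_months(d: date, n: int) -> date:
    """Shift a date by n calendar months, clamping to the month's last day."""
    month_index = d.month - 1 + n
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def is_fresh(published: date, claim_type: str, today: date) -> bool:
    """True if the source is still inside the age limit for its claim type."""
    return today <= add_months(published, MAX_AGE_MONTHS[claim_type])


print(is_fresh(date(2024, 1, 31), "statistic", date(2025, 7, 31)))  # → True
print(is_fresh(date(2024, 1, 31), "statistic", date(2025, 8, 1)))   # → False
```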

The update problem is the one most founders ignore. A post published in 2024 with accurate 2024 stats becomes a liability in 2026 when those stats have moved. Build a review cadence into your publishing workflow. At minimum, flag every post with a "review by" date when you publish it. If a stat changes and your post still ranks, you're now spreading misinformation under your own name.
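One way to set the "review by" date, rather than picking it arbitrarily, is to use the day the first cited source ages out under the ruleset above. The sketch below assumes that policy (it's one reasonable approach, not a standard) and repeats the age table so it runs on its own.

```python
import calendar
from datetime import date
from typing import Optional

# Same age limits as the ruleset above, repeated so this sketch runs standalone.
MAX_AGE_MONTHS = {"statistic": 18, "methodology": 36, "product_comparison": 6}


def add_months(d: date, n: int) -> date:
    """Shift a date by n calendar months, clamping to the month's last day."""
    month_index = d.month - 1 + n
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def review_by(citations: list[tuple[date, str]]) -> Optional[date]:
    """Given (published, claim_type) pairs for one post, return the date
    the first cited source ages out, or None if the post cites nothing."""
    expiries = (add_months(published, MAX_AGE_MONTHS[kind]) for published, kind in citations)
    return min(expiries, default=None)


post_citations = [
    (date(2024, 3, 15), "statistic"),           # stale after 2025-09-15
    (date(2024, 9, 1), "product_comparison"),   # stale after 2025-03-01
    (date(2023, 1, 10), "methodology"),         # stale after 2026-01-10
]
print(review_by(post_citations))  # → 2025-03-01
```

The product comparison drives the date here, which is the point: the post is only as current as its most perishable claim.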

Attribution Formatting That AI Engines Can Parse

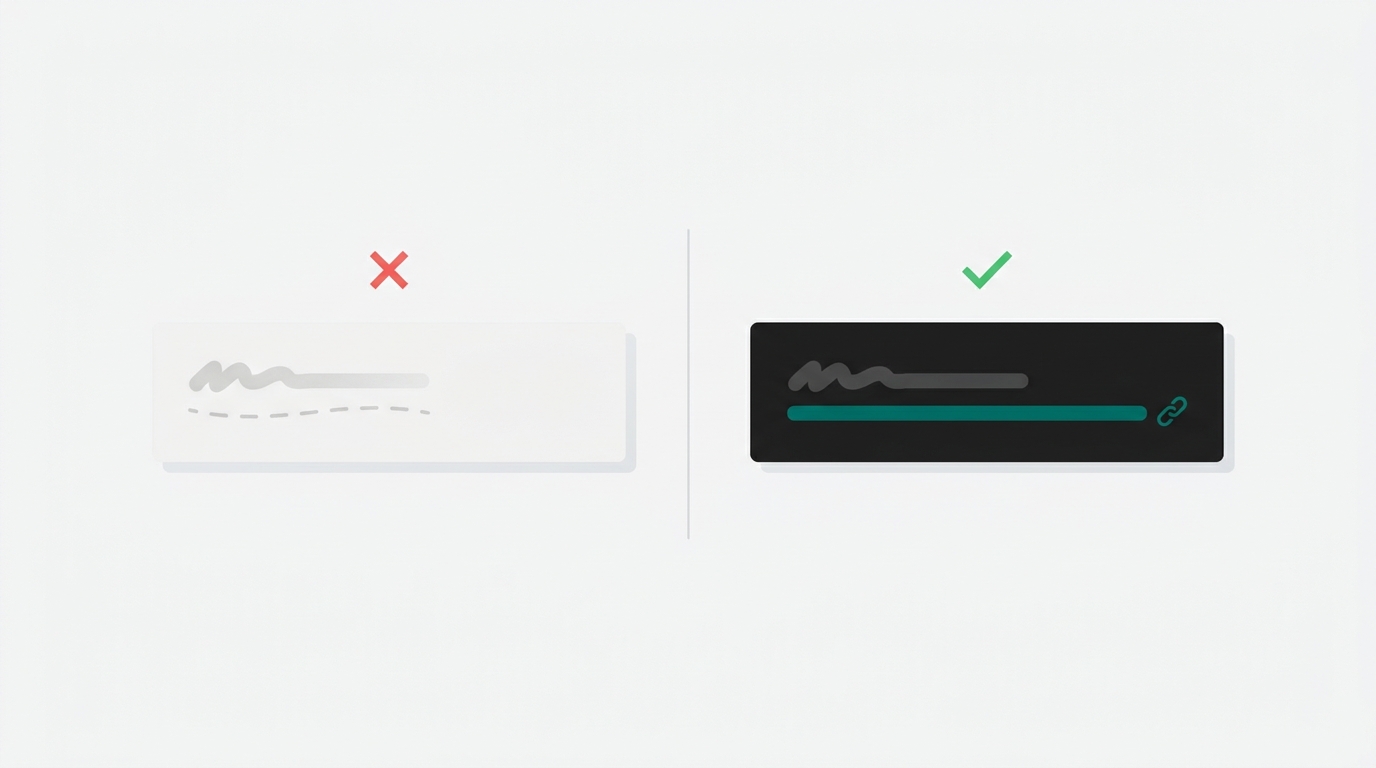

There's a clear difference between attribution an AI engine can extract and attribution it can't.

Can extract: "A 2025 Muck Rack study found that 82% of AI citations come from earned media sources."

Cannot extract: "Research shows that most citations come from third-party sources."

The second version contains no named source, no year, and no anchor for verification. Even if the underlying claim is accurate, no AI engine can trace it, and no reader can check it.

An academicseo.co.uk guide on how to get cited by AI identifies three elements that consistently improve extractability: numbered claims, explicit attribution phrasing, and simple syntax. The same principles apply to blog posts. The format is: [Claim] + [source name] + [year] + [inline hyperlink to primary source]. Avoid footnotes for web content.

AI engines parse inline text. They don't reliably process endnotes or footnote-style attribution at the bottom of a page.
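Here is the [claim] + [source name] + [year] + [inline hyperlink] pattern as a small Python helper that emits one HTML sentence. The sentence template ("A {year} {source} {kind} found that...") is just one phrasing, and `inline_attribution` is a hypothetical name. What the sketch is meant to show is that every element lives in the same sentence as the claim, with the link inline rather than in a footnote. Values are HTML-escaped so a stray `&` in a URL doesn't break the markup.

```python
from html import escape


def inline_attribution(claim: str, source: str, year: int, url: str, kind: str = "study") -> str:
    """Render one sentence carrying the source name, year, and an inline link.

    The template is one possible phrasing; the fixed part is that every
    element sits in the same sentence as the claim.
    """
    link = f'<a href="{escape(url, quote=True)}">{escape(source)} {escape(kind)}</a>'
    return f"A {year} {link} found that {escape(claim)}."


# Placeholder source and URL, for illustration only.
print(inline_attribution("most readers skim", "Example Research", 2025, "https://example.com/report"))
```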

The payoff for getting this right is significant. Digitalstrategyforce.com research found that adding citations and quotations to content improved generative engine visibility by 40-115%. The format of the citation matters as much as its presence: a claim with a named source and a live hyperlink gives an engine something to extract and verify, while the same claim with vague attribution gives it nothing to trace.

Where Citations Go in the Post Structure

Where you place your strongest sourced claims matters, not just whether they're present.

A CXL analysis of Google AI Overview citation behavior found that 55% of citations came from the top 30% of a page, 24% from the middle, and 21% from the bottom. In other words, the top of the page drew more citations than the middle and bottom combined. Front-loading matters.

The practical implication: put your most citable stat or finding in the first 300 words. Not buried in section four where it takes four minutes of scrolling to reach. If you've done the research and have a finding worth surfacing, lead with it.
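You can check the 300-word rule mechanically once you've decided which stat is your strongest. That judgment stays with you; the Python sketch below only answers "how many words come before this exact phrase?" It assumes the phrase appears verbatim in a plain-text export of the draft and that splitting on whitespace is a good-enough word count. The function names are hypothetical.

```python
from typing import Optional


def words_before(text: str, phrase: str) -> Optional[int]:
    """Count whitespace-separated words before the first occurrence of phrase.
    Returns None if the phrase is not in the text."""
    pos = text.find(phrase)
    if pos == -1:
        return None
    return len(text[:pos].split())


def in_opening(text: str, phrase: str, limit: int = 300) -> bool:
    """True if phrase starts within the first `limit` words of text."""
    count = words_before(text, phrase)
    return count is not None and count < limit


# Stand-in draft: 120 filler words, then the stat you want to surface.
draft = "word " * 120 + "Your strongest stat goes here."
print(in_opening(draft, "Your strongest stat"))  # → True
```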

There's also a distribution angle worth understanding. Research from authoritytech.io on earned media and AI citations found that content distributed through third-party outlets reached a 34% citation rate, compared to 8% for brand-owned sites alone. That's more than four times the rate. It doesn't mean you should abandon your blog.

It means building a distribution layer around your content: guest posts, press mentions, syndication to relevant publications. Your blog post becomes more citable when other trusted sources point to it or republish it.

One more structural note: Yext's research on AI citation behavior found that citation patterns differ from model to model. Diversifying where your content lives, not just what's on your domain, increases the surface area for citation across platforms.

A Repeatable Citation Checklist for Every Post

Run this before every publish, not just the posts you think will rank.

Pre-publish citation checks:

- Every stat has a named source, a year, and a live URL

- No source older than 18 months for statistics and market data (6 months for product comparisons)

- At least one primary source per major section

- Attribution phrasing names the source inline, not in a footnote

- Your strongest sourced claim appears in the first 300 words
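Three of these checks (complete attribution, inline placement, a primary source per section) can run over a simple list of citation records. The sketch below assumes you've already pulled those records out of the draft, by hand or otherwise; the `CitationRecord` fields are hypothetical. Recency and placement need dates and the full draft text, so they're left out of this one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CitationRecord:
    section: str           # heading the citation appears under
    source: str            # named source; empty string if none
    year: Optional[int]    # publication year; None if missing
    url: str               # empty string if missing
    is_primary: bool       # original study, official docs, filing, government data
    inline: bool           # attribution is in the sentence, not a footnote


def checklist_problems(records: list[CitationRecord]) -> list[str]:
    """Return human-readable problems for three of the pre-publish checks."""
    problems = []
    sections = []                 # preserve first-seen order
    sections_with_primary = set()
    for r in records:
        if r.section not in sections:
            sections.append(r.section)
        if not (r.source and r.year and r.url):
            problems.append(f"{r.section}: citation missing source, year, or URL")
        if not r.inline:
            problems.append(f"{r.section}: attribution for {r.source or 'unnamed source'} is not inline")
        if r.is_primary:
            sections_with_primary.add(r.section)
    for section in sections:
        if section not in sections_with_primary:
            problems.append(f"{section}: no primary source")
    return problems


records = [
    CitationRecord("Intro", "Example Lab", 2025, "https://example.com/study", True, True),
    CitationRecord("Recency", "", None, "", False, False),
]
for problem in checklist_problems(records):
    print(problem)
```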

The verification step is the one most writing tools skip: before you publish, click every citation link. Confirm it resolves. Confirm the page actually contains the claim you're attributing to it. A URL that exists but doesn't support the claim is as problematic as a broken link. It just takes longer for readers to catch it.

This is where Ryterr's pipeline differs from single-shot generation. It checks citations live during drafting, flags broken URLs, and surfaces a quality score before the post ships.
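If you'd rather script part of the click-through yourself, the Python standard library covers the basics. This sketch fetches each URL with `urllib.request.urlopen` (which raises on 4xx and 5xx responses) and then does a case-insensitive substring search for a snippet of the claim. That search is deliberately crude: it misses paraphrases, numbers split across HTML tags, and pages rendered by JavaScript, so "unsupported" means "go read the page," not "wrong." The function names are hypothetical, and the `fetch` parameter exists so you can swap in your own fetcher.

```python
import http.client
import urllib.request
from typing import Callable, Optional


def fetch_text(url: str, timeout: float = 10.0) -> Optional[str]:
    """Return the response body as text, or None if the request fails.
    urlopen raises HTTPError (an OSError subclass) for 4xx/5xx responses."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.read().decode("utf-8", errors="replace")
    except (OSError, ValueError, http.client.HTTPException):
        # Network and HTTP errors, malformed URLs, broken responses.
        return None


def verify_citation(
    url: str,
    claim_snippet: str,
    fetch: Callable[[str], Optional[str]] = fetch_text,
) -> str:
    """Classify a citation as 'broken', 'unsupported', or 'ok'.

    'unsupported' only means the snippet wasn't found verbatim; read the page.
    """
    body = fetch(url)
    if body is None:
        return "broken"
    if claim_snippet.lower() not in body.lower():
        return "unsupported"
    return "ok"


# Offline demo with a stand-in fetcher; in real use, omit `fetch`.
pages = {"https://example.com/study": "<p>Accuracy ranged from 39% to 77%.</p>"}
print(verify_citation("https://example.com/study", "39% to 77%", fetch=pages.get))  # → ok
```

Some sites reject Python's default user agent, so a "broken" result deserves a manual click too before you rip the citation out.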

The last point is about governance. A citation framework only works if it's applied consistently. Document your standard: source age limits, acceptable source types, attribution format. Apply it to every post. The posts that don't rank still carry your name, and a single fabricated citation in a minor post can undermine trust you've built across dozens of good ones.

FAQ

Does recency matter for every type of source, or just statistics?

Recency matters most for statistics, market data, and product comparisons, where facts shift quickly. For methodology, academic frameworks, or foundational concepts, a 36-month-old source is often fine if nothing newer exists. The question to ask is: could this number have changed in the last 18 months? If yes, find a fresher source.

What if the primary source is behind a paywall?

Cite the aggregator, but be transparent. Name the original study and note that you're citing it via a secondary source. This is more honest than pretending you accessed the primary, and AI engines can still extract the attribution. Whenever possible, find a preprint, press release, or official summary from the original publisher.

Does the number of citations per post affect how AI engines treat the content?

More citations don't automatically help. What matters is citation quality and format. A single well-attributed, hyperlinked claim from a primary source outperforms five vague references to "industry research." The digitalstrategyforce.com findings on 40-115% visibility improvements were tied to citations and quotations formatted in extractable ways, not just higher citation counts.

How do I handle a stat I've seen everywhere but can't trace to an original source?

Don't use it. If you can't find the original study that produced the number, you can't verify it's accurate, current, or even real. Write the point qualitatively instead. "Many founders undercharge" is honest. "73% of founders undercharge" without a traceable source is a liability.

My post was accurate when published. How often do I actually need to update it?

For statistics and market data, review annually at minimum. For product comparisons or fast-moving categories like AI tooling, six months is more appropriate. A simple system: add a "last reviewed" date to every post and a calendar reminder to audit the citations. Posts that rank for years will accumulate readers long after the original stats expire.

Sources

- getfancy.ai: Article recency bias in AI citation

- academicseo.co.uk: How to get cited by AI

- discoveredlabs.com: AI citation patterns across ChatGPT, Claude, and Perplexity

- digitalstrategyforce.com: How AI models select sources for citation

- cxl.com: Google AI Overview citation sources

- authoritytech.io: Machine relations, evidence, and earned media for AI citations

- arxiv.org: Factual accuracy in AI citation with retrieval tools

- yext.com: AI citation behavior across models

Take your most recent published post and open every citation link. Check whether the source actually supports the claim you're making. Fix the ones that don't. If you're writing new posts and want citations checked before publish rather than after, Ryterr runs that check live during drafting, with a quality score visible before you export. Copy, paste, ship.